CV GAN模型常用的一些东西

转自AI Studio,原文链接:CV GAN模型常用的一些东西,面向新人 - 飞桨AI Studio也算一次经验的总结分享了,我会把我的一些思考和trick在本章分享,希望大家有所收获,若有错误请指出啊。1. 使用VGG的感知损失(perceptual loss)这个损失在风格迁移中使用很多,大家如果刚开始做风格迁移任务一般也很难拥有属于自己的感知损失,这里我就替大家写好了,拿走不谢这里我举一个

转自AI Studio,原文链接:CV GAN模型常用的一些东西,面向新人 - 飞桨AI Studio

也算一次经验的总结分享了,我会把我的一些思考和trick在本章分享,希望大家有所收获,若有错误请指出啊。

1. 使用VGG的感知损失(perceptual loss)

这个损失在风格迁移中使用很多,大家如果刚开始做风格迁移任务一般也很难拥有属于自己的感知损失,这里我就替大家写好了,拿走不谢

这里我举一个例子谷歌的实时风格迁移算法,确定不想进来看看?我只能说精彩简陋版复现在这个项目中我用了。

import numpy as np

import paddle

import paddle.optimizer

import paddle.nn as nn

import paddle.nn.functional as F

class VGG19(nn.Layer):

cfg = [

64, 64, 'M', 128, 128, 'M', 256, 256, 256, 256, 'M', 512, 512, 512, 512,'M', 512, 512, 512, 512, 'M']

def __init__(self, output_index: int = 30) -> None:

super().__init__()

# arch = 'caffevgg19'

# weights_path = get_path_from_url(model_urls[arch][0],

# model_urls[arch][1])

data_dict: dict = np.load("./VGG/vgg19_no_fc.npy",

encoding='latin1',

allow_pickle=True).item()

self.features = self.make_layers(self.cfg, data_dict)

del data_dict

self.features = nn.Sequential(*self.features.sublayers()[:output_index])

mean = paddle.to_tensor([103.939, 116.779, 123.68])

self.mean = mean.unsqueeze(0).unsqueeze(-1).unsqueeze(-1)

def _process(self, x):

rgb = (x * 0.5 + 0.5) * 255 # value to 255

bgr = paddle.stack((rgb[:, 2, :, :], rgb[:, 1, :, :], rgb[:, 0, :, :]),

1) # rgb to bgr

return bgr - self.mean # vgg norm

def _forward_impl(self, x):

x = self._process(x)

# NOTE get output with out relu activation

features_list =[]

# print("features.sub",self.features.sublayers())

for layer in self.features.sublayers():

x =layer(x)

if isinstance(layer,nn.ReLU):

features_list.append(x)

# features_list.append(x)

# print(len(features_list[-5:]))

# x = self.features(x)

return features_list

def forward(self, x):

all_features = self._forward_impl(x)

features_list = []

features_indexs = [0,2,4,8,12]

for d in features_indexs:

features_list.append(all_features[d])

return features_list

@staticmethod

def get_conv_filter(data_dict, name):

return data_dict[name][0]

@staticmethod

def get_bias(data_dict, name):

return data_dict[name][1]

@staticmethod

def get_fc_weight(data_dict, name):

return data_dict[name][0]

def make_layers(self, cfg, data_dict, batch_norm=False) -> nn.Sequential:

layers = []

in_channels = 3

block = 1

number = 1

for v in cfg:

if v == 'M':

layers += [nn.MaxPool2D(kernel_size=2, stride=2)]

block += 1

number = 1

# print("M",len(layers))

else:

conv2d = nn.Conv2D(in_channels, v, kernel_size=3, padding=1)

""" set value """

weight = paddle.to_tensor(

self.get_conv_filter(data_dict, f'conv{block}_{number}'))

weight = weight.transpose((3, 2, 0, 1))

bias = paddle.to_tensor(

self.get_bias(data_dict, f'conv{block}_{number}'))

conv2d.weight.set_value(weight)

conv2d.bias.set_value(bias)

number += 1

if batch_norm:

layers += [conv2d, nn.BatchNorm2D(v), nn.ReLU()]

else:

layers += [conv2d, nn.ReLU()]

in_channels = v

# print("number",block)

return nn.Sequential(*layers)

In [1]

'''

该代码块是VGG19去除最后几层全连接还有卷积得到的特征提取器 的使用案例

好久以前基于pytorch改成paddle的,不要问我代码源头,我自己也忘了github哪个大神的,在这里做成好用的轮子,好用就行了别管那么多

使用格式:代码在VGG_Model.py中,然后参数文件npy存在VGG文件夹中

输入tensor [N,C,H,W] 通道顺序RGB 数值区间[-1,1]

return 最后的特征feature,和逐层特征组成的list(每次relu后的特征图加最后的conv特征图)[:-5] 说白了就是返回一个tensor和list

PS:想改的话就自己改

'''

from VGG_Model import VGG19

import numpy as np

import paddle

m = np.random.random([1, 3,256,256])

real_image = paddle.to_tensor(m,dtype="float32")

features_list = []

'''

这是我常用的算感知损失各个特征图损失的比例

'''

rates = [1.0 / 32, 1.0 / 16, 1.0 / 8, 1.0 / 4, 1.0]

all_features = VGG19()(real_image)

# print(len(all_features))

# for i in all_features:

# print(i.shape)

print("------------------------------")

for i in all_features:

# print(all_features[d].shape)

print(i.shape)

print(len(all_features))

------------------------------ [1, 64, 256, 256] [1, 128, 128, 128] [1, 256, 64, 64] [1, 512, 32, 32] [1, 512, 16, 16] 5

2. 来自SNGAN的谱归一化

论文名称:Spectral Normalization for Generative Adversarial Networks

这个就是为了让GAN生成对抗损失下降更加稳定,然后谱归一化是用在判别器参数上的,这是个很好用的GAN trick.目的是让判别器满足Lipschitz约束。

这个代码第一次认识到SN(Spectral Normalization)来自于FutureSI的SPADE项目,我数学暂时不行,然后具体原理论文我没看过啊。

下方代码块写了具体应用案例

In [4]

'''

该代码块代表谱归一化示例,判别器用到了这个,可以参考一下

'''

from Normal import build_norm_layer

import paddle.nn as nn

import paddle

SpectralNorm = build_norm_layer('spectral')

import numpy as np

input_nc = 3

x = np.random.uniform(-1, 1, [4, 3, 256, 256]).astype('float32')

x = paddle.to_tensor(x)

conv = SpectralNorm(nn.Conv2D(3, 5, 3, 2, 1,

weight_attr=None,

bias_attr=None))

conv(x).shape[4, 5, 128, 128]

3. 判别器架构

这里提供几种判别器的架构,大家可以自己拿去尝试

3.1 MultiscaleDiscriminator

这个判别器,把中间的特征也输出了,进行一个特征对齐,方便进行Feature Match Loss,进行一个特征匹配.

我在衣服生成项目用过这个判别器

import numpy as np

# import os

import paddle

import paddle.optimizer

import paddle.nn as nn

# from tqdm import tqdm

# from paddle.io import Dataset

# from paddle.io import DataLoader

# import paddle.tensor as tensor

from Normal import build_norm_layer

class D_OPT():

'''

opt格式

'''

def __init__(self):

super(D_OPT, self).__init__()

self.ndf = 64

self.n_layers_D = 4

self.num_D = 2

# 当使用谱归一化时,手动设置卷积层的初始值(由于参数名称的改变,weights_init无法正常工作)

spn_conv_init_weight = nn.initializer.Constant(value=2e-2)

spn_conv_init_bias = nn.initializer.Constant(value=.0)

# spn_conv_init_weight = None

# spn_conv_init_bias = None

class NLayersDiscriminator(nn.Layer):

def __init__(self, opt):

super(NLayersDiscriminator, self).__init__()

kw =3

padw = int(np.ceil((kw - 1.0) / 2))

nf = opt.ndf#64

input_nc =3

layer_count = 0

layer = nn.Sequential(

nn.Conv2D(input_nc, nf, kw, 2, padw),

nn.LeakyReLU(0.2)

)

self.add_sublayer('block_'+str(layer_count), layer)

layer_count += 1

# feat_size_prev = np.floor((opt.crop_size + padw * 2 - (kw - 2)) / 2).astype('int64')

SpectralNorm = build_norm_layer('spectral')

InstanceNorm = build_norm_layer('instance')

for n in range(1, opt.n_layers_D):

nf_prev = nf

nf = min(nf * 2, 512)

stride = 1 if n == opt.n_layers_D - 1 else 2

layer = nn.Sequential(

# nn.Conv2D(nf_prev, nf, kw, stride, padw,

# weight_attr=spn_conv_init_weight,

# bias_attr=spn_conv_init_bias),

SpectralNorm(nn.Conv2D(nf_prev, nf, kw, stride, padw,

weight_attr=spn_conv_init_weight,

bias_attr=spn_conv_init_bias)),

InstanceNorm(nf),

nn.LeakyReLU(.2)

)

self.add_sublayer('block_'+str(layer_count), layer)

layer_count += 1

layer = nn.Conv2D(nf, 1, kw, 1, padw)

self.add_sublayer('block_'+str(layer_count), layer)

layer_count += 1

def forward(self, input):

# print("NLayersDiscriminator input shape",input.shape)

output = []

for layer in self._sub_layers.values():

output.append(layer(input))

input = output[-1]

return output

class MultiscaleDiscriminator(nn.Layer):

def __init__(self, opt):

super(MultiscaleDiscriminator, self).__init__()

for i in range(opt.num_D):#num_D =2

sequence = []

for j in range(i):

sequence += [nn.AvgPool2D(3, 2, 1)]

sequence += [NLayersDiscriminator(opt)]

sequence = nn.Sequential(*sequence)

self.add_sublayer('nld_'+str(i), sequence)

def forward(self, input):

output = []

for layer in self._sub_layers.values():

output.append(layer(input))

return output

def build_m_discriminator():

return MultiscaleDiscriminator(D_OPT())

if __name__ =="__main__":

opt = D_OPT()

md = MultiscaleDiscriminator(opt)

md = build_m_discriminator()

np.random.seed(15)

# nld = NLayersDiscriminator(opt)

# input_nc = nld.compute_D_input_nc(opt)

input_nc = 3

x = np.random.uniform(-1, 1, [4, 3, 256, 256]).astype('float32')

x = paddle.to_tensor(x)

print("input tensor x.shape",x.shape)\

y = md(x)

for i in range(len(y)):

for j in range(len(y[i])):

print(i, j, y[i][j].shape)

print('--------------------------------------')

下方代码块就是具体封装好的使用方法

In [ ]

# !python -u Discriminator.py

'''

该代码块代表多尺度判别器示例

'''

from Discriminator import build_m_discriminator

import numpy as np

md = build_m_discriminator()

input_nc = 3

x = np.random.uniform(-1, 1, [4, 3, 256, 256]).astype('float32')

x = paddle.to_tensor(x)

print("input tensor x.shape",x.shape)\

y = md(x)

for i in range(len(y)):

for j in range(len(y[i])):

print(i, j, y[i][j].shape)

print('--------------------------------------')3.2 animegan我使用的判别器

import paddle.nn as nn

import paddle.nn.functional as F

import paddle.tensor as tensor

import paddle

class AnimeDiscriminator(nn.Layer):

def __init__(self, channel: int = 64, nblocks: int = 3) -> None:

super().__init__()

channel = channel // 2

last_channel = channel

f = [

nn.Conv2D(3, channel, 3, stride=1, padding=1, bias_attr=False),

nn.LeakyReLU(0.2)

]

in_h = 256

for i in range(1, nblocks):

f.extend([

nn.Conv2D(last_channel,

channel * 2,

3,

stride=2,

padding=1,

bias_attr=False),

nn.LeakyReLU(0.2),

nn.Conv2D(channel * 2,

channel * 4,

3,

stride=1,

padding=1,

bias_attr=False),

nn.GroupNorm(1, channel * 4),

nn.LeakyReLU(0.2)

])

last_channel = channel * 4

channel = channel * 2

in_h = in_h // 2

self.body = nn.Sequential(*f)

self.head = nn.Sequential(*[

nn.Conv2D(last_channel,

channel * 2,

3,

stride=1,

padding=1,

bias_attr=False),

nn.GroupNorm(1, channel * 2),

nn.LeakyReLU(0.2),

nn.Conv2D(

channel * 2, 1, 3, stride=1, padding=1, bias_attr=False)

])

def forward(self, x):

x = self.body(x)

x = self.head(x)

return x

3.3 我线稿上色(SCFT)使用的LSGAN判别器

# LSGAN Discriminator

class Discriminator(nn.Layer):

def __init__(self, ndf, nChannels):

super(Discriminator, self).__init__()

# input : (batch * nChannels * image width * image height)

# Discriminator will be consisted with a series of convolution networks

self.layer1 = nn.Sequential(

# Input size : input image with dimension (nChannels)*64*64

# Output size: output feature vector with (ndf)*32*32

nn.Conv2D(

in_channels = nChannels,

out_channels = ndf,

kernel_size = 4,

stride = 2,

padding = 1,

bias_attr = False

),

nn.BatchNorm2D(ndf),

nn.LeakyReLU(0.2)

)

self.layer2 = nn.Sequential(

# Input size : input feature vector with (ndf)*32*32

# Output size: output feature vector with (ndf*2)*16*16

nn.Conv2D(

in_channels = ndf,

out_channels = ndf*2,

kernel_size = 4,

stride = 2,

padding = 1,

bias_attr = False

),

nn.BatchNorm2D(ndf*2),

nn.LeakyReLU(0.2)

)

self.layer3 = nn.Sequential(

# Input size : input feature vector with (ndf*2)*16*16

# Output size: output feature vector with (ndf*4)*8*8

nn.Conv2D(

in_channels = ndf*2,

out_channels = ndf*4,

kernel_size = 4,

stride = 2,

padding = 1,

bias_attr = False

),

nn.BatchNorm2D(ndf*4),

nn.LeakyReLU(0.2)

)

self.layer4 = nn.Sequential(

# Input size : input feature vector with (ndf*4)*8*8

# Output size: output feature vector with (ndf*8)*4*4

nn.Conv2D(

in_channels = ndf*4,

out_channels = ndf*8,

kernel_size = 4,

stride = 2,

padding = 1,

bias_attr = False

),

nn.BatchNorm2D(ndf*8),

nn.LeakyReLU(0.2)

)

self.layer5 = nn.Sequential(

# Input size : input feature vector with (ndf*8)*4*4

# Output size: output probability of fake/real image

nn.Conv2D(

in_channels = ndf*8,

out_channels = 1,

kernel_size = 4,

stride = 1,

padding = 0,

bias_attr = False

),

# nn.Sigmoid() -- Replaced with Least Square Loss

)

def forward(self, x):

out = self.layer1(x)

out = self.layer2(out)

out = self.layer3(out)

out = self.layer4(out)

out = self.layer5(out)

return out

x = paddle.randn([4,3,256,256])

Discriminator(64,3)(x).shape

4. 模型初始化探讨

我们一般采取随机初始化策略,这是最为常见的方式,这可以打破模型参数的对称性,让模型可训练。如果模型参数都为全都初始化为同样的数值,那么反向传播计算梯度的时候,获得的梯度是相同的,这样就不合理了。 但是随机初始化参数也是有问题的,如果选择的随机分布不恰当,就会导致训练时模型优化陷入困境,具体而言,就是当神经网络结构更加复杂后,随机初始化参数方法会让复杂的神经网络结构后几层输出都接近为0,导致难以获得有效梯度,让模型训练陷入困境。

为了避免这个问题,提出了Xavier Initialization方法,该初始化参数的方法并不复杂,基本思路是保障输入输出的方差一致,这可以避免复杂模型输出后几层趋向于0,具体的做法就是将随机初始化的参数乘以缩放因子sqrt(1/layers_input_dims).但是当激活函数使用RELU的时候就会出现问题,随着训练的进行,后几层输出趋向于0,He Initializer可以解决这个问题。

He Initializer 方法基本思想是,假设ReLU网络每一层有一半的神经元被激活,另一半为0,为了保证输入 与输出的方差一致,需要在Xavier Initialization方法的基本上除以2.具体的做法就是将随机初始化的参数乘以缩放因子sqrt(2/layers_input_dims).

该截图来自于CSDN博客

下方代码是来自QQ群中看张牙舞爪大佬的分享,给他点个关注。

In [13]

import paddle

import paddle.nn as nn

from paddle.nn.initializer import KaimingNormal,Constant

def weight_init(module):

for n,m in module.named_children():

print("initialize:"+n)

if isinstance(m,nn.Conv2D):

KaimingNormal()(m.weight,m.weight.block)

if m.bias is not None:

Constant(0.0)(m.bias)

elif isinstance(m,nn.Conv1D):

KaimingNormal()(m.weight,m.weight.block)

if m.bias is not None:

Constant(0.0)(m.bias)

elif isinstance(m,(nn.BatchNorm2D,nn.InstanceNorm2D)):

Constant(1.0)(m.weight)

Constant(3000.0)(m._variance)

Constant(3000.0)(m._mean)

if m.bias is not None:

Constant(0.0)(m.bias)

elif isinstance(m,nn.Linear):

KaimingNormal()(m.weight,m.weight.block)

if m.bias is not None:

Constant(0.0)(m.bias)

else:

pass

class Net(nn.Layer):

def __init__(self):

super(Net,self).__init__()

self.conv = nn.Conv2D(3,5,3)

self.bn = nn.BatchNorm2D(5,5)

self.ac = nn.ReLU()

def forward(self,x):

x = self.conv(x)

x = self.bn(x)

x = self.ac(x)

return x

net =Net()

x = paddle.randn([4,3,256,256])

net(x).shape

weight_init(net)

print(net.conv.weight.shape)initialize:conv initialize:bn initialize:ac [5, 3, 3, 3]

/opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages/paddle/nn/layer/norm.py:653: UserWarning: When training, we now always track global mean and variance. "When training, we now always track global mean and variance.")

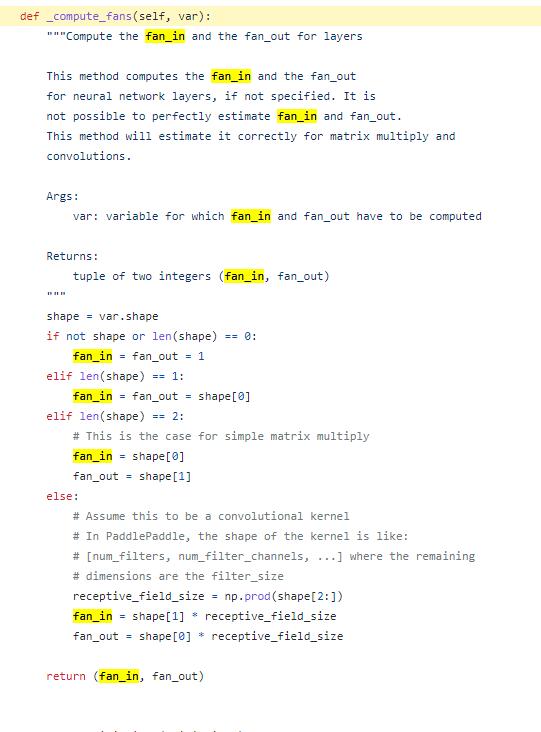

这里我为 以下截图来自于paddle源码,这里方便大家真正弄懂fan_in具体内容

KaimingNormal继承于MSRAInitializer,然后MSRAInitializer的f_in 为self._compute_fans(var)得到的。var就是conv2d里面的卷积核weight

所以按照计算,fan_in为3*3*3等于27

更多推荐

已为社区贡献1438条内容

已为社区贡献1438条内容

所有评论(0)